The Anatomy of Modern Information Control

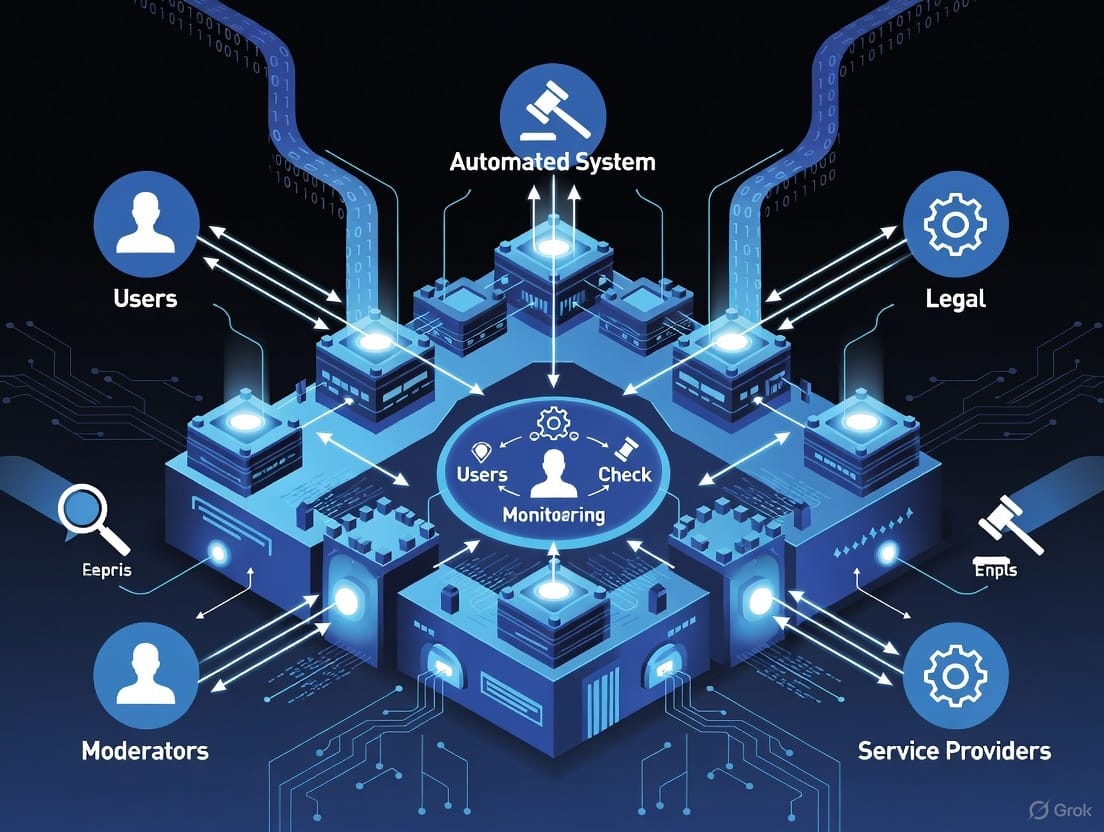

A highly scalable, self-reinforcing censorship system has emerged worldwide. It operates through five interdependent actor groups and a four-stage cycle of monitoring, verification, amplification, and enforcement, all while presenting itself as mere protection against harm.

In every society that has embraced digital communication, a remarkably similar architecture has emerged to manage what people are allowed to see, share, and believe. It rarely announces itself as censorship. Instead, it presents as “trust and safety”, “election integrity”, “countering foreign interference”, or “protection from harmful content”. Beneath the different flags and acronyms, the operating model is almost identical worldwide. Understanding its mechanics as a system, rather than as a local political fight, is the first step to recognizing it wherever it appears.

How the Machine Works

The system runs on a closed, self-reinforcing loop with four repeating phases:

- Continuous Monitoring & Flagging

  Specialized monitoring units (often mixtures of contractors, algorithms, and partnered organizations) scan platforms in real time for content that violates vaguely worded policies (“inauthentic behavior”, “coordinated harm”, “manipulated media”, etc.). The definitions are kept broad on purpose; elasticity is a feature, not a bug.

- Verification by Aligned Networks

  Flagged content is handed to a tier of trusted third-party organizations (fact-checking networks, academic centers, specialized NGOs) whose funding and partnerships tie back, directly or indirectly, to the same authorities that wrote the rules. These groups rarely limit themselves to clear-cut hoaxes; they increasingly rate opinion, tone, and selective framing as “false” or “misleading”.

- Amplification of the Authorized Narrative

  Once a verdict is issued, subsidized media partners, influencers, and advertising campaigns flood the information space with the approved version. The original post may be down-ranked, labeled, or removed, while counter-narratives are boosted by algorithms that have already been tuned to favor “authoritative sources”.

- Enforcement & Deterrence

  Platforms are held liable unless they act quickly. The threat of fines, loss of legal immunity, or outright bans forces rapid compliance. Over time, creators and publishers internalize the boundaries and self-censor long before any flag is raised. The loop then feeds fresh data back into phase 1, training the next generation of filters.

The beauty of the design—from the architects’ perspective—is that no single entity needs to issue direct orders. Pressure is distributed, responsibility is diffused, and every participant can claim to be acting independently.

The Five Categories of Actors (Names Change, Roles Stay the Same)

Strip away the logos and you always find the same five functional groups working in concert:

- The Rule-Setters

  Supranational bodies, national regulators, or public-private “multi-stakeholder” initiatives that create the legal and policy framework. They define what counts as harm and grant themselves emergency powers to expand the definition when needed.

- The Professional Verifiers

  Networks of fact-checking organizations and “disinformation research” centers whose grants, salaries, and access depend on producing outputs that align with the Rule-Setters’ priorities.

- The Narrative Amplifiers

  Legacy media, digital news startups, and content creators who receive direct or indirect funding (grants, advertising credits, subsidized distribution) to promote the verified line and marginalize everything else.

- The Civil-Society Proxies

  Thousands of NGOs, activist coalitions, and “digital rights” groups that campaign for ever-stricter rules while presenting themselves as grassroots defenders of openness. Their budgets often dwarf those of the organizations they claim to oversee.

- The Academic Legitimizers

  University departments, think tanks, and research institutes that produce studies, lexicons, and “scientific” frameworks giving the whole apparatus an aura of objectivity. Their funding loops back to the same sources.

Because money, data, and personnel flow between these five layers through revolving doors and shared initiatives, the system maintains coherence without ever needing a single written master plan.

Why It Scales So Effectively

- It weaponizes noble intentions: who could oppose stopping child exploitation imagery, terrorist propaganda, or election sabotage?

- It externalizes the blame: foreign adversaries are always the stated target, even when domestic critics are the ones silenced.

- It delegates the dirty work: no government has to censor directly; it simply threatens platforms until private companies do it for them.

- It creates a permanent crisis mentality: every election, pandemic, or protest becomes justification for new emergency powers that quietly become permanent.

Recognizing the Pattern

Next time you see a wave of synchronized takedowns, identical talking points across outlets that never agree on anything else, or a new “independent” institute launched with fanfare to combat “threats to democracy,” don’t ask first who is being targeted today. Ask instead which of the five roles each actor is playing—and who is funding the orchestra.

The machinery is modular, portable, and constantly refining itself. Once you can spot the blueprint, you’ll see it operating far beyond any one region or ideology. Knowledge of the model is the only real defense against it.

Because the most effective control systems are the ones most people still believe are protecting them.